Apache Cassandra® 5.0: Improving performance with Unified Compaction Strategy

Introduction

Unified Compaction Strategy (UCS), introduced in Apache Cassandra 5.0, is a versatile compaction framework that not only unifies the benefits of Size-Tiered (STCS) and Leveled (LCS) Compaction Strategies, but also introduces new capabilities like shard parallelism, density-aware SSTable organization, and safer incremental compaction, all of which deliver more predictable performance at scale. By utilizing a flexible scaling model, UCS allows operators to tune compaction behavior to match evolving workloads, spanning from write-heavy to read-heavy, without requiring disruptive strategy migrations in most cases.

In the past, operators had to choose between rigid strategies and accept significant trade-offs. UCS changes this paradigm, allowing the system to efficiently adapt to changing workloads with tuneable configurations that can be altered mid-flight and even applied differently across different compaction levels based on data density.

Why compaction matters

Compaction is the critical process that determines a cluster’s long-term health and cost-efficiency. When executed correctly, it produces denser nodes with highly organized SSTables, allowing each server to store more data without sacrificing speed. This efficiency translates to a smaller infrastructure footprint, which can lower cloud costs and resource usage.

Conversely, inefficient compaction is a primary driver of performance degradation. Poorly managed SSTables lead to fragmented data, forcing the system to work harder for every request. This overhead consumes excessive CPU and I/O, often forcing teams to add more nodes (horizontal scaling) just to keep up with background maintenance.

Key concepts and terminology

To understand how UCS optimizes a cluster, it is necessary to understand the fundamental trade-offs it balances:

- Read amplification: Occurs when the database must consult multiple SSTables to answer a single query. High read amplification acts as a “latency tax,” forcing extra I/O to reconcile data fragments.

- Write amplification: A metric that quantifies the overhead of background processes (such as compactions). It represents the ratio between total data written to disk and the amount of data originally sent by an application. High write amplification wears out SSDs and steals throughput.

- Space amplification: The ratio of disk space used to the actual size of the “live” data. It tracks data such as tombstones or overwritten rows that haven’t been purged yet.

- Fan factor: The “growth dial” for the cluster data hierarchy. It defines how many files of a similar size must accumulate before they are merged into a larger tier.

- Sharding: UCS splits data into smaller, independent token ranges (shards), allowing the system to run multiple compactions in parallel across CPU cores.
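The amplification ratios defined above are simple divisions, and a small sketch can make them concrete. The function names and the byte counts below are hypothetical, chosen only for illustration; they are not measurements from a real cluster.

```python
def write_amplification(bytes_written_to_disk: float, bytes_sent_by_app: float) -> float:
    """Ratio of total bytes written to disk to bytes the application sent."""
    if bytes_sent_by_app <= 0:
        raise ValueError("bytes_sent_by_app must be positive")
    return bytes_written_to_disk / bytes_sent_by_app


def space_amplification(disk_bytes_used: float, live_bytes: float) -> float:
    """Ratio of disk space used to the size of the live data."""
    if live_bytes <= 0:
        raise ValueError("live_bytes must be positive")
    return disk_bytes_used / live_bytes


# Hypothetical figures (in GB): the application sent 100, while flushes plus
# compactions wrote 450 in total; 130 sits on disk for 100 of live data.
print(write_amplification(450, 100))  # 4.5
print(space_amplification(130, 100))  # 1.3
```

A write amplification of 4.5 here would mean each byte the application wrote was rewritten several more times by background work, which is the overhead the scaling parameter trades against read cost.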

UCS provides baseline architectural improvements that were not available in older strategies:

Improved compaction parallelism

Older strategies often got stuck on a single thread during large merges. UCS sharding allows a server to use its full processing power. This significantly reduces the likelihood of compaction storms and keeps tail latencies (p99) predictable.

Reduced disk space amplification

Because UCS operates on smaller shards, it doesn’t need to double the entire disk space of a node to perform a major merge. This greatly reduces the risk of nodes running out of space during heavy maintenance cycles.

Density-based SSTable organization

UCS measures SSTables by density (data size relative to the token range covered) rather than by raw size. This mitigates the huge SSTable problem, where a single massive file becomes too large to compact, hindering read performance indefinitely.
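As a rough illustration of the density idea, the sketch below treats density as SSTable size divided by the fraction of the token range the SSTable spans. The helper name and the sizes are hypothetical, and the real implementation has more moving parts; this only shows why a small sharded SSTable can rank alongside a large unsharded one.

```python
def sstable_density(size_bytes: float, token_fraction: float) -> float:
    """Size divided by the fraction of the token range the SSTable spans."""
    if not 0 < token_fraction <= 1:
        raise ValueError("token_fraction must be in (0, 1]")
    return size_bytes / token_fraction


GIB = 1024 ** 3

# A 4 GiB SSTable spanning the whole token range...
whole_range = sstable_density(4 * GIB, 1.0)
# ...and a 1 GiB SSTable spanning a quarter of it (one of four shards).
one_shard = sstable_density(1 * GIB, 0.25)

print(whole_range == one_shard)  # True
```

Under this measure the two SSTables sit at the same point in the hierarchy, even though one file is a quarter of the size of the other.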

Scaling parameter

The scaling parameter (denoted as W) is a configurable setting that determines the size ratio between compaction tiers. It helps balance write amplification and read performance by controlling how much data is rewritten during compaction operations. A lower scaling parameter value results in more frequent, smaller compactions, whereas a higher value leads to larger compaction groups.

The strategy engine: tuning and parameters

UCS acts as a strategy engine: adjusting the scaling parameter (W) allows it to mimic, or outperform, its predecessors STCS and LCS.

At a high level, the scaling parameter influences the effective fan-out behavior at each compaction level. Tiered-style settings such as T4 allow more SSTables to accumulate before merging, favoring write efficiency, while leveled-style settings such as L10 keep SSTables more tightly organized, reducing read amplification at the cost of additional background work.

The numbers below are illustrative and not prescriptive:

UCS configuration guide

| Workload type | Strategy target | Scaling (W) | Primary benefit |
| --- | --- | --- | --- |
| Heavy writes / IoT | STCS (Tiered) | Positive (e.g., 2, written as T4) | Lowest write amplification |
| Heavy reads | LCS (Leveled) | Negative (e.g., -8, written as L10) | Lowest read amplification |
| Balanced | Hybrid | Zero (0) | Balanced performance for general apps |

Practical example

UCS allows operators to mix behaviors across the data lifecycle.

'scaling_parameters': 'T4, T4, L10'

Note that scaling_parameters takes a string format that can accommodate parameters for per-level tuning.

This example instructs a cluster: “Use tiered compaction for the first two levels to keep up with the high write volume, but once data reaches the third level, reorganize it into a leveled structure so reads stay fast.”
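To make the string format easier to read, here is a minimal sketch that decodes a scaling_parameters value into a per-level W and fan factor. It assumes the convention described in the Apache Cassandra UCS documentation as we understand it: T followed by f means W = f - 2, L followed by f means W = 2 - f, N means W = 0, a bare integer is W itself, the fan factor is 2 + |W|, and the last entry applies to every deeper level. The function names are hypothetical and this is not Cassandra's own parser.

```python
def parse_scaling_parameter(token: str) -> int:
    """Convert one entry such as 'T4', 'L10', 'N' or '-8' into W."""
    token = token.strip().upper()
    if token == "N":
        return 0
    if token.startswith("T"):
        return int(token[1:]) - 2   # tiered: W = f - 2
    if token.startswith("L"):
        return 2 - int(token[1:])   # leveled: W = 2 - f
    return int(token)               # W given directly


def describe_levels(scaling_parameters: str, levels: int):
    """Return (level, W, fan factor, style) for the first `levels` levels."""
    ws = [parse_scaling_parameter(t) for t in scaling_parameters.split(",")]
    rows = []
    for level in range(levels):
        w = ws[min(level, len(ws) - 1)]  # last entry repeats for deeper levels
        fan_factor = 2 + abs(w)
        style = "tiered" if w > 0 else "leveled" if w < 0 else "balanced"
        rows.append((level, w, fan_factor, style))
    return rows


for row in describe_levels("T4, T4, L10", 4):
    print(row)
# (0, 2, 4, 'tiered')
# (1, 2, 4, 'tiered')
# (2, -8, 10, 'leveled')
# (3, -8, 10, 'leveled')
```

Read this way, 'T4, T4, L10' keeps the first two levels tiered with a fan factor of 4 and switches to leveled behavior with a fan factor of 10 from the third level onward.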

Here’s a fuller, illustrative example of how one might structure their CQL to change the compaction strategy.

ALTER TABLE keyspace_name.table_name WITH compaction = { 'class': 'UnifiedCompactionStrategy', 'scaling_parameters': 'T4,T4,L10' };

Operational evolution: moving beyond major compactions

In older strategies and in Apache Cassandra versions prior to 5.0, operators often felt forced to run a major compaction to reclaim disk space or fix performance. This was a critical event that could impact a node’s I/O for extended periods of time and required substantial free disk space to complete.

Because UCS is density-aware and sharded, it effectively performs compactions constantly and granularly, so major compactions are rarely needed. It identifies overlapping data within specific token ranges (shards) and cleans them up incrementally. Operators no longer have to choose between a fragmented disk and a risky, resource-heavy manual compaction; UCS keeps data density more uniform across the cluster over time.

The migration advantage: “in-place” adoption

One of the key performance features of a UCS migration is in-place adoption, meaning that when a table is switched to UCS, it does not immediately force a massive data rewrite. Instead, it looks at the existing SSTables, calculates their density, and maps them into its new sharding structure.

This allows for moving from STCS or LCS to UCS with significantly less I/O overhead than any other strategy change.

Conclusion

UCS is an operational shift toward simplicity and predictability. By removing the need to choose between compaction trade-offs, UCS allows organizations to scale with confidence. Whether handling a massive influx of IoT data or serving high-speed user profiles, UCS helps clusters remain performant, cost-effective, and ready for the future.

On a newly deployed NetApp Instaclustr Apache Cassandra 5 cluster, UCS is already the default strategy (while Apache Cassandra 5.0 has STCS set as the default).

Ready to experience this new level of Cassandra performance for yourself? Try it with a free 30-day trial today!

The post Apache Cassandra® 5.0: Improving performance with Unified Compaction Strategy appeared first on Instaclustr.

Exploring the key features of Cassandra® 5.0

Apache Cassandra has become one of the most broadly adopted distributed databases for large-scale, highly available applications since its launch as an open source project in 2008. The 5.0 release in September 2024 represents the most substantial advancement to the project since 4.0 was released in July 2021. Multiple customers (and our own internal Cassandra use case) have now been happily running on Cassandra 5 for up to 12 months, so we thought the time was right to explore the key features they are leveraging to power their modern applications.

An overview of new features in Apache Cassandra 5.0

Apache Cassandra 5.0 introduces core capabilities aimed at AI-driven systems, low-latency analytical workloads, and environments that blend operational and analytical processing.

Highlights include:

- The new vector data type and an Approximate Nearest Neighbor (ANN) index based on Hierarchical Navigable Small World (HNSW), which is integrated into the Storage-Attached Index (SAI) architecture

- Trie-based memtables and the Big Trie-Index (BTI) SSTable format, delivering better memory efficiency and more consistent write performance

- The Unified Compaction Strategy, a tunable density-based approach that can align with leveled or tiered compaction patterns.

Additional enhancements include expanded mathematical CQL functions, dynamic data masking, and experimental support for Java 17.

At NetApp, Apache Cassandra 5.0 is fully supported, and we are actively assisting customers as they transition from 4.x.

A deeper look at Cassandra 5.0’s key features

Storage-Attached Indexes (SAI)

Storage-Attached Indexes bring a modern, storage-integrated approach to secondary indexing in Apache Cassandra, resolving many of the scalability and maintenance challenges associated with earlier index implementations. Legacy Secondary Indexes (2i) and SASI remain available, but SAI offers a more robust and predictable indexing model for a broad range of production workloads.

SAI operates per-SSTable, so data is indexed locally rather than through the cluster-wide coordination required by other strategies. This model supports diverse CQL data types, enables efficient numeric and text range filters, and provides more consistent performance characteristics than 2i or SASI. The same storage-attached foundation is also used for Cassandra 5’s vector indexing mechanism, allowing ANN search to operate within the same storage and query framework.

SAI supports combining filters across multiple indexed columns and works seamlessly with token-aware routing to reduce unnecessary coordinator work. Public evaluations and community testing have shown faster index builds, more predictable read paths, and improved disk utilization compared with previous index formats.

Operationally, SAI functions as part of the storage engine itself: indexes are defined using standard CQL statements and are maintained automatically during flush and compaction, with no cluster-wide rebuilds required. This provides more flexible query options and can simplify application designs that previously relied on manual denormalization or external indexing systems.

Native Vector Search capabilities

Apache Cassandra 5.0 introduces native support for high-dimensional vector embeddings through the new vector data type. Embeddings represent semantic information in numerical form, enabling similarity search to be performed directly within the database. The vector type is integrated with the database’s storage-attached index architecture, which uses HNSW graphs to efficiently support ANN search across cosine, Euclidean, and dot-product similarity metrics.

With vector search implemented at the storage layer, Cassandra supports applications involving semantic matching, content discovery, and retrieval-oriented workflows while maintaining the system’s established scalability and fault-tolerance characteristics.

After upgrading to 5.0, existing schemas can add vector columns and store embeddings through standard write operations. For example:

UPDATE products SET embedding = [0.1, 0.2, 0.3, 0.4, 0.5] WHERE id = <id>;

To create a new table with a vector type column:

CREATE TABLE items ( product_id UUID PRIMARY KEY, embedding VECTOR<FLOAT, 768> ); // 768 denotes dimensionality

Because vector indexes are attached to SSTables, they participate automatically in the compaction and repair processes and do not require an external indexing system. ANN queries can be combined with regular CQL filters, allowing similarity searches and metadata conditions to be evaluated within a unified distributed query workflow. This brings vector retrieval into Apache Cassandra’s native consistency, replication, and storage model.
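For readers new to the similarity metrics named above, the following sketch computes them in plain Python and runs an exact (brute-force) nearest-neighbor lookup. It is only meant to show what an ANN index approximates; it does not reflect how Cassandra implements its index, and the tiny two-dimensional embeddings and function names are hypothetical.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


def euclidean_distance(a, b):
    """Straight-line distance between two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def nearest(query, embeddings, k=1):
    """Exact top-k by cosine similarity; an ANN index approximates this."""
    ranked = sorted(
        embeddings.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]


# Hypothetical 2-dimensional embeddings (real ones have hundreds of dimensions).
embeddings = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.9, 0.1]}
print(nearest([1.0, 0.05], embeddings, k=2))  # ['a', 'c']
```

The exact scan compares the query against every stored vector, which is the cost an approximate index is designed to avoid at scale.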

Unified Compaction Strategy (UCS)

Unified Compaction Strategy in Apache Cassandra 5 introduces a density-aware approach to organizing SSTables that blends the strengths of Leveled Compaction Strategy (LCS) and Size Tiered Compaction Strategy (STCS). UCS aims to provide the predictable read amplification associated with LCS and the write efficiency of STCS, without many of the workload-specific drawbacks that previously made compaction selection difficult. Choosing an unsuitable compaction strategy in earlier releases could lead to operational complexity and long-term performance issues, which UCS is designed to mitigate.

UCS exposes a set of tunable parameters like density thresholds and per-level scaling that let operators adjust compaction behavior toward read-heavy, write-heavy, or time-series patterns. This flexibility also helps smooth the transition from existing strategies, as UCS can adopt and improve the current SSTable layout without requiring a full rewrite in most cases. The introduction of compaction shards further increases parallelism and reduces the impact of large compactions on cluster performance.

Although LCS and STCS remain available (and while STCS remains the default strategy in 5.0, UCS is the default strategy on newly deployed NetApp Instaclustr’s managed Apache Cassandra 5 clusters), UCS supports a broader range of workloads, reduces the operational burden of compaction tuning, and aligns well with other storage engine improvements in Apache Cassandra 5 such as trie-based SSTables and Storage-Attached Indexes.

Trie Memtables and Trie-Indexed SSTables

Trie Memtables and Trie-indexed SSTables (Big Trie-Index, BTI) are significant storage engine enhancements released in Apache Cassandra 5. They are designed to reduce memory overhead, improve lookup performance, and increase flush efficiency. A trie data structure stores keys by shared prefixes instead of repeatedly storing full keys, which lowers object count and improves CPU cache locality compared with the legacy skip-list memtable structure. These benefits are particularly visible in high-ingestion, IoT, and time-series workloads.

Skip-list memtables store full keys for every entry, which can lead to large heap usage and increased garbage collection activity under heavy write loads. Trie Memtables substantially reduce this overhead by compacting key storage and avoiding pointer-heavy layouts. On disk, the BTI SSTable format replaces the older BIG index with a trie-based partition index that removes redundant key material and reduces the number of key comparisons needed during partition lookups.
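The prefix-sharing idea can be seen with a toy trie built from nested dictionaries. This is a teaching sketch only: Cassandra's trie memtable and BTI format are far more compact byte-level structures, and the key names and helper functions here are hypothetical.

```python
END = "$end"  # marker for "a key ends here"; cannot clash with single characters


def build_trie(keys):
    """Insert each key character by character, reusing shared prefixes."""
    root = {}
    for key in keys:
        node = root
        for ch in key:
            node = node.setdefault(ch, {})
        node[END] = True
    return root


def count_char_nodes(node):
    """Count the character nodes stored in the trie."""
    return sum(
        1 + count_char_nodes(child)
        for ch, child in node.items()
        if ch != END
    )


keys = ["sensor-001", "sensor-002", "sensor-003"]
trie = build_trie(keys)

print(sum(len(k) for k in keys))  # 30 characters when every key is stored in full
print(count_char_nodes(trie))     # 12 character nodes when the prefix is shared
```

The shared prefix "sensor-00" is stored once, so only the final character differs per key, which is the kind of saving the text above describes for keys with common prefixes.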

Using Trie memtables requires enabling both the trie-based memtable implementation and the BTI SSTable format. Existing BIG SSTables are converted to BTI through normal compaction or by rebuilding data. On NetApp Instaclustr’s managed Apache Cassandra clusters Trie Memtables and BTI are enabled by default, but when upgrading major versions to 5.0, data must be converted from BIG to BTI first to utilize Trie structures.

Other new features

Mathematical CQL functions

Apache Cassandra 5.0 added a rich set of math functions allowing developers to perform computations directly within queries. This reduces data transfer overhead and client-side post-processing, among many other benefits. From fundamental functions like ABS(), ROUND(), or SQRT() to more complex operations like SIN(), COS(), TAN(), these math functions apply to a multitude of domains, from financial data to scientific measurements and spatial data.

Dynamic Data Masking

Dynamic Data Masking (DDM) is a new feature to obscure sensitive column-level data at query time or permanently attach the functionality to a column so that the data always returns obfuscated. Stored data values are not altered in this process, and administrators can control access through role-based access control (RBAC) to ensure only those with access can see the data while also tuning the visibility of the obscured data. This feature helps with adherence to data privacy regulations such as GDPR, HIPAA, and PCI DSS without needing external redaction systems.

Apache Cassandra 5.0 packs a punch with game changing features that meet the needs of modern workloads and applications. Features like vector search capabilities and Storage Attached Indexes stand out as they will inevitably shape how data can be leveraged within the same database while maintaining speed, scale, and resilience.

When you deploy a managed cluster on NetApp Instaclustr’s Managed Platform, you get the benefits of all these amazing features without worrying about configuration and maintenance.

Ready to experience the power of Apache Cassandra 5.0 for yourself? Try it free for 30 days today!

The post Exploring the key features of Cassandra® 5.0 appeared first on Instaclustr.

Instaclustr product update: December 2025

Here’s a roundup of the latest features and updates that we’ve recently released.

If you have any particular feature requests or enhancement ideas that you would like to see, please get in touch with us.

Major announcements

OpenSearch®

AI Search for OpenSearch®: Unlocking next-generation search

AI Search for OpenSearch, which is now available in Public Preview on the NetApp Instaclustr Managed Platform, is designed to bring semantic, hybrid, and multimodal search capabilities to OpenSearch deployments—turning them into an end-to-end AI-powered search solution within minutes. With built-in ML models, vector indexing, and streamlined ingestion pipelines, next-generation search can be enabled in minutes without adding operational complexity. This feature powers smarter, more relevant discovery experiences backed by AI—securely deployed across any cloud or on-premises environment.

ClickHouse®

FSx for NetApp ONTAP and Managed ClickHouse® integration is now available

We’re excited to announce that NetApp has introduced seamless integration between Amazon FSx for NetApp ONTAP and Instaclustr Managed ClickHouse, to enable customers to build a truly hybrid lakehouse architecture on AWS. This integration is designed to deliver lightning-fast analytics without the need for complex data movement, while leveraging FSx for ONTAP’s unified file and object storage, tiered performance, and cost optimization. Customers can now run zero-copy lakehouse analytics with ClickHouse directly on FSx for ONTAP data to simplify operations, accelerate time-to-insight, and reduce total cost of ownership.

Instaclustr for PostgreSQL® on Amazon FSx for ONTAP: A new era

We’re excited to announce the public preview of Instaclustr Managed PostgreSQL integrated with Amazon FSx for NetApp ONTAP, combining enterprise-grade storage with world-class open source database management. This integration is designed to deliver higher IOPS, lower latency, and advanced data management without increasing instance size or adding costly hardware. Customers can now run PostgreSQL clusters backed by FSx for ONTAP storage, leveraging on-disk compression for cost savings and paving the way for ONTAP-powered features, such as instant snapshot backups, instant restores, and fast forking. These ONTAP-enabled features are planned to unlock huge operational benefits and will be launched with our GA release.

- Released Apache Cassandra 5.0.5 into general availability on the NetApp Instaclustr Managed Platform.

- Transitioned Apache Cassandra v4.1.8 to CLOSED lifecycle state; scheduled to reach End of Life (EOL) on December 20, 2025.

- Kafka on Azure now supports v5 generation nodes, available in General Availability.

- Instaclustr Managed Apache ZooKeeper has moved from General Availability to closed status.

- Kafka and Kafka Connect 3.1.2 and 3.5.1 are retired; 3.6.2, 3.7.1, 3.8.1 are in legacy support. The next set of lifecycle state changes for Kafka and Kafka Connect, at the end of March 2026, will see all supported versions 3.8.1 and below marked End of Life.

- Karapace Rest Proxy and Schema Registry 3.15.0 are closed. Customers are advised to move to version 5.x.

- Kafka Rest Proxy 5.0.0 and Kafka Schema Registry 5.0.0, 5.0.4 have been moved to end of life. Affected customers have been contacted by Support to schedule a migration to a supported version as soon as possible.

- ClickHouse 25.3.6 has been added to our managed platform in General Availability.

- Kafka Table Engine integration with ClickHouse has added support to enable real-time data ingestion, streamline streaming analytics, and accelerate insights.

- New ClickHouse node sizes, powered by AWS m7g, r7i, and r7g instances, are now in Limited Availability for cluster creation.

- Cadence is now available to be provisioned with Cassandra 5.x, designed to deliver improved performance, enhanced scalability, and stronger security for mission-critical workflows.

- OpenSearch 2.19.3 and 3.2.0 have been released to General Availability.

- PostgreSQL AWS PrivateLink support has been added, enabling connectivity between VPCs using AWS PrivateLink.

- PostgreSQL version 18.0 has now been released to General Availability, alongside PostgreSQL versions 16.10 and 17.6.

- Added new PostgreSQL metrics for connect states and wait event types.

- PostgreSQL Load Balancer add-on is now available, providing a unified endpoint for cluster access, simplifying failover handling, and ensuring node health through regular checks.

- We’re working on enabling multi-datacenter (multi-DC) cluster provisioning via API and console, designed to make it easier to deploy clusters across regions with secure networking and reduced manual steps.

- We’re working on adding Kafka Tiered Storage for clusters running in GCP, designed to bring affordable, scalable retention and instant access to historical data, and to ensure flexibility and performance across clouds for enterprise Kafka users.

- We’re planning to extend our Managed ClickHouse to allow it to work with on-prem deployments.

- Following the success of our public preview, we’re preparing to launch PostgreSQL integrated with FSx for NetApp ONTAP (FSxN) into General Availability. This enhancement is designed to combine enterprise-grade PostgreSQL with FSxN’s scalable, cost-efficient storage, enabling customers to optimize infrastructure costs while improving performance and flexibility.

- As part of our ongoing advancements in AI for OpenSearch, we are planning to enable adding GPU nodes into OpenSearch clusters, aiming to enhance the performance and efficiency of machine learning and AI workloads.

- Self-service Tags Management feature—allowing users to add, edit, or delete tags for their clusters directly through the Instaclustr console, APIs, or Terraform provider for RIYOA deployments.

- Cadence Workflow, the open source orchestration engine created by Uber, has officially joined the Cloud Native Computing Foundation (CNCF) as a Sandbox project. This milestone ensures transparent governance, community-driven innovation, and a sustainable future for one of the most trusted workflow technologies in modern microservices and agentic AI architectures. Uber donates Cadence Workflow to CNCF: The next big leap for the open source project—read the full story and discover what’s next for Cadence.

- Upgrading ClickHouse® isn’t just about new features—it’s essential for security, performance, and long-term stability. In ClickHouse upgrade: Why staying updated matters, you’ll learn why skipping upgrades can lead to technical debt, missed optimizations, and security risks. Then, explore A guide to ClickHouse® upgrades and best practices for practical strategies, including when to choose LTS releases for mission-critical workloads and when stable releases make sense for fast-moving environments.

- Our latest blog, AI Search for OpenSearch®: Unlocking next-generation search, explains how this new solution enables smarter discovery experiences using built-in ML models, vector embeddings, and advanced search techniques—all fully managed on the NetApp Instaclustr Platform. Ready to explore the future of search? Read the full article and see how AI can transform your OpenSearch deployments.

If you have any questions or need further assistance with these enhancements to the Instaclustr Managed Platform, please contact us.

SAFE HARBOR STATEMENT: Any unreleased services or features referenced in this blog are not currently available and may not be made generally available on time or at all, as may be determined in NetApp’s sole discretion. Any such referenced services or features do not represent promises to deliver, commitments, or obligations of NetApp and may not be incorporated into any contract. Customers should make their purchase decisions based upon services and features that are currently generally available.

The post Instaclustr product update: December 2025 appeared first on Instaclustr.

Freezing streaming data into Apache Iceberg™, Part 1: Using Apache Kafka® Connect Iceberg Sink Connector

Introduction

Ever since the first distributed system (i.e. 2 or more computers networked together, in 1969), there has been the problem of distributed data consistency: How can you ensure that data from one computer is available and consistent with the second (and more) computers? This problem can be uni-directional (one computer is considered the source of truth, others are just copies), or bi-directional (data must be synchronized in both directions across multiple computers).

Some approaches to this problem I’ve come across in the last 8 years include Kafka Connect (for elegantly solving the heterogeneous many-to-many integration problem by streaming data from source systems to Kafka and from Kafka to sink systems, some earlier blogs on Apache Camel Kafka Connectors and a blog series on zero-code data pipelines), MirrorMaker2 (MM2, for replicating Kafka clusters, a 2 part blog series), and Debezium (Change Data Capture/CDC, for capturing changes from databases as streams and making them available in downstream systems, e.g. for Apache Cassandra and PostgreSQL)—MM2 and Debezium are actually both built on Kafka Connect.

Recently, some “sink” systems have been taking over responsibility for streaming data from Kafka into themselves, e.g. OpenSearch pull-based ingestion (c.f. OpenSearch Sink Connector), and the ClickHouse Kafka Table Engine (c.f. ClickHouse Sink Connector). These “pull-based” approaches are potentially easier to configure and don’t require running a separate Kafka Connect cluster and sink connectors, but some downsides may be that they are not as reliable or independently scalable, and you will need to carefully monitor and scale them to ensure they perform adequately.

And then there’s “zero-copy” approaches—these rely on the well-known computer science trick of sharing a single copy of data using references (or pointers), rather than duplicating the data. This idea has been around for almost as long as computers, and is still widely applicable, as we’ll see in part 2 of the blog.

The distributed data use case we’re going to explore in this 2-part blog series is streaming Apache Kafka data into Apache Iceberg, or “Freezing streaming Apache Kafka data into an (Apache) Iceberg”! In part 1 we’ll introduce Apache Iceberg and look at the first approach for “freezing” streaming data using the Kafka Connect Iceberg Sink Connector.

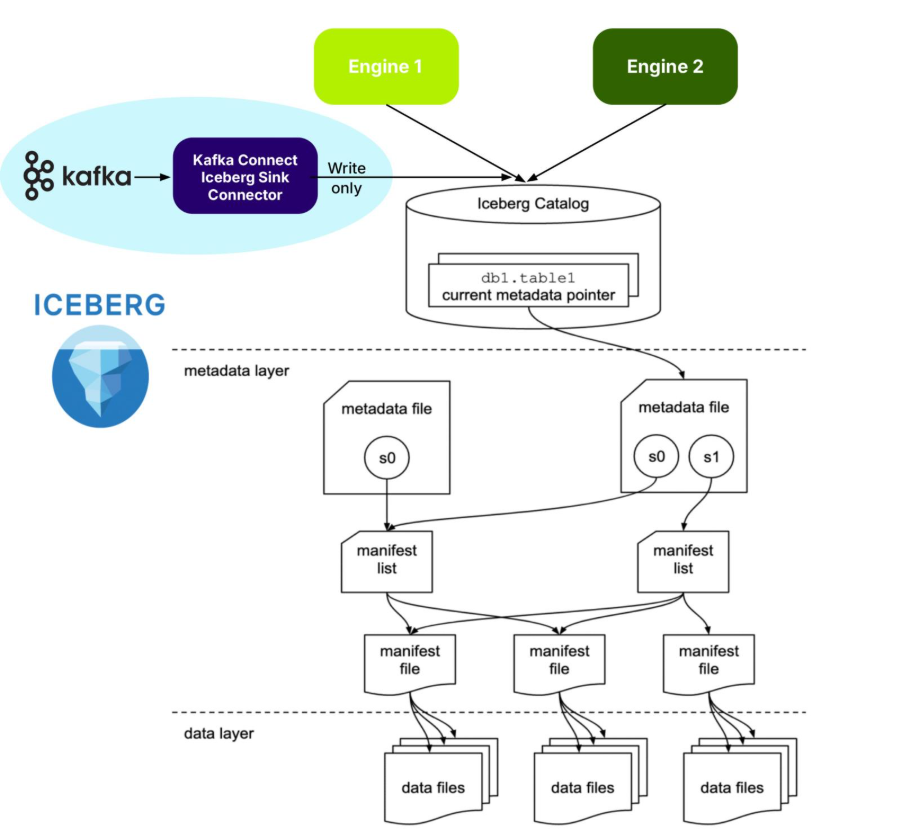

What is Apache Iceberg?

Apache Iceberg is an open source table format specification optimized for column-oriented workloads, supporting huge analytic datasets. It supports multiple concurrent engines that can insert and query table data using SQL. And Iceberg is organized like, well, an iceberg!

The tip of the Iceberg is the Catalog. An Iceberg Catalog acts as a central metadata repository, tracking the current state of Iceberg tables, including their names, schemas, and metadata file locations. It serves as the “single source of truth” for a data Lakehouse, enabling query engines to find the correct metadata file for a table to ensure consistent and atomic read/write operations.

Just under the water, the next layer is the metadata layer. The Iceberg metadata layer tracks the structure and content of data tables in a data lake, enabling features like efficient query planning, versioning, and schema evolution. It does this by maintaining a layered structure of metadata files, manifest lists, and manifest files that store information about table schemas, partitions, and data files, allowing query engines to prune unnecessary files and perform operations atomically.

The data layer is at the bottom. The Iceberg data layer is the storage component where the actual data files are stored. It supports different storage backends, including cloud-based object storage like Amazon S3 or Google Cloud Storage, or HDFS. It uses file formats like Parquet or Avro. Its main purpose is to work in conjunction with Iceberg’s metadata layer to manage table snapshots and provide a more reliable and performant table format for data lakes, bringing data warehouse features to large datasets.

As shown in the above diagram, Iceberg supports multiple different engines, including Apache Spark and ClickHouse. Engines provide the “database” features you would expect, including:

- Data Management

- ACID Transactions

- Query Planning and Optimization

- Schema Evolution

- And more!

I’ve recently been reading an excellent book on Apache Iceberg (“Apache Iceberg: The Definitive Guide”), which explains the philosophy, architecture and design, including operation, of Iceberg. For example, it says that it’s best practice to treat data lake storage as immutable—data should only be added to a Data Lake, not deleted. So, in theory at least, writing infinite, immutable Kafka streams to Iceberg should be straightforward!

But because it’s a complex distributed system (which looks like a database from above water but is really a bunch of files below water!), there is some operational complexity. For example, it handles change and consistency by creating new snapshots for every modification, enabling time travel, isolating readers from writes, and supporting optimistic concurrency control for multiple writers. But you need to manage snapshots (e.g. expiring old snapshots). And chapter 4 (performance optimisation) explains that you may need to worry about compaction (reducing too many small files), partitioning approaches (which can impact read performance), and handling row-level updates. The first two issues may be relevant for Kafka, but probably not the last one. So, it looks like it’s a good fit for streaming Kafka use cases, but we may need to watch out for Iceberg management issues.

“Freezing” streaming data with the Kafka Iceberg Sink Connector

But Apache Iceberg is “frozen”, so what’s the connection to fast-moving streaming data? You certainly don’t want to collide with an iceberg from your speedy streaming “ship”, but you may want to freeze your streaming data for long-term analytical queries in the future. How can you do that without sinking? Actually, a “sink” is the first answer: A Kafka Connect Iceberg Sink Connector is the most common way of “freezing” your streaming data in Iceberg!

Kafka Connect is the standard framework provided by Apache Kafka to move data from multiple heterogeneous source systems to multiple heterogeneous sink systems, using:

- A Kafka cluster

- A Kafka Connect cluster (running connectors)

- Kafka Connect source connectors

- Kafka topics and

- Kafka Connect Sink Connectors

That is, a highly decoupled approach. It provides real-time data movement with high scalability, reliability, error handling and simple transformations.

Here’s the Kafka Connect Iceberg Sink Connector official documentation.

It appears to be reasonably complicated to configure this sink connector; you will need to know something about Iceberg. For example, what is a “control topic”? It’s apparently used to coordinate commits for exactly-once semantics (EOS).

The connector supports fan-out (writing to multiple Iceberg tables from one topic), fan-in (writing to one Iceberg table from multiple topics), static and dynamic routing, and filtering.

As with many technologies that you may want to use as Kafka Connect sinks, support for Kafka metadata is limited. The KafkaMetadata Transform (which injects topic, partition, offset and timestamp properties) is only experimental at present.

How are Iceberg tables created with the correct metadata? If you have JSON record values, then schemas are inferred by default (but may not be correct or optimal). Alternatively, explicit schemas can be included in-line or referenced from a Kafka Schema Registry (e.g. Karapace), and, as an added bonus, schema evolution is supported. Also note that Iceberg tables may have to be manually created prior to use if your Catalog doesn’t support table auto-creation.
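To make the configuration surface a little more concrete, here is a minimal sketch of a connector definition built in Python. The topic, table, catalog URI and connector name are hypothetical, and the property keys are the ones I understand the Apache Iceberg Kafka Connect documentation to use, so check them against the version you deploy.

```python
import json

# Hypothetical values: connector name, topic "events", table "analytics.events",
# and the REST catalog URI. Property keys should be verified against the
# Iceberg Kafka Connect documentation for your connector version.
connector = {
    "name": "iceberg-sink-example",
    "config": {
        "connector.class": "org.apache.iceberg.connect.IcebergSinkConnector",
        "tasks.max": "2",
        "topics": "events",
        # Static routing: one topic to one Iceberg table
        "iceberg.tables": "analytics.events",
        # Only useful if the catalog supports table auto-creation
        "iceberg.tables.auto-create-enabled": "true",
        "iceberg.tables.evolve-schema-enabled": "true",
        # Control topic used to coordinate commits
        "iceberg.control.topic": "control-iceberg",
        # Commit interval in milliseconds (5 minutes)
        "iceberg.control.commit.interval-ms": "300000",
        "iceberg.catalog.type": "rest",
        "iceberg.catalog.uri": "https://catalog.example.com",
    },
}

payload = json.dumps(connector, indent=2)
print(payload)
```

A payload like this would typically be sent to the Kafka Connect REST API (POST to /connectors) on the Connect cluster.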

From what I understood about Iceberg, to use it (e.g. for writes), you need support from an engine (e.g. to add raw data to the Iceberg warehouse, create the metadata files, and update the catalog). How does this work for Kafka Connect? From this blog I discovered that the Kafka Connect Iceberg Sink connector is functioning as an Iceberg engine for writes, so there really is an engine, but it’s built into the connector.

As is the case with all Kafka Connect Sink Connectors, records are available as soon as they are written to Kafka topics by Kafka producers and Kafka Connect Source Connectors, i.e. records in active segments can be copied immediately to sink systems. But is the Iceberg Sink Connector real-time? Not really! By default, it commits to Iceberg every 5 minutes (iceberg.control.commit.interval-ms) to prevent multiplication of small files, something that Iceberg(s) doesn’t/don’t like (“melting”?). In practice, it’s because every data file must be tracked in the metadata layer, which impacts performance in many ways. Proliferation of small files is typically addressed by optimization and compaction (e.g. Apache Spark supports Iceberg management, including these operations).

So, unlike most Kafka Connect sink connectors, which write as quickly as possible, there will be lag before records appear in Iceberg tables (“time to freeze” perhaps)!
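A quick back-of-the-envelope calculation shows why the commit interval matters for small files. This sketch assumes, purely for illustration, that every writer (for example a task writing to one table partition) produces at least one data file per commit; real file counts depend on the data and partitioning.

```python
MS_PER_DAY = 24 * 60 * 60 * 1000

def commits_per_day(commit_interval_ms: int) -> float:
    """Number of commit cycles per day for a given commit interval."""
    return MS_PER_DAY / commit_interval_ms

def min_data_files_per_day(commit_interval_ms: int, writers: int) -> int:
    """Lower bound on new data files per day.

    Assumption (not from the connector docs): each writer emits at least
    one data file in every commit cycle.
    """
    return int(commits_per_day(commit_interval_ms)) * writers

# Default 5-minute interval versus a hypothetical 10-second interval, 4 writers
for interval_ms in (300_000, 10_000):
    print(interval_ms, min_data_files_per_day(interval_ms, writers=4))
```

Under these assumptions the default interval yields 288 commits per day, while a 10-second interval yields 8,640, so the number of files to track (and later compact) grows by a factor of 30.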

The systems are separate (Kafka and Iceberg are independent), records are copied to Iceberg, and that’s it! This is a clean separation of concerns and ownership. Kafka owns the source data (with Kafka controlling data lifecycles, including record expiry), while the Kafka Connect Iceberg Sink Connector reads from Kafka and writes to Iceberg, and scales independently of Kafka. Kafka doesn’t handle any of the Iceberg management. Once the data has landed in Iceberg, Kafka has no further visibility or interest in it. And the pipeline is purely one way and write only: reads or deletes are not supported.

Here’s a summary of this approach to freezing streams:

- Kafka Connect Iceberg Sink Connector shares all the benefits of the Kafka Connect framework, including scalability, reliability, error handling, routing, and transformations.

- At a minimum, JSON values are required; ideally, full schemas are provided and referenced from Karapace, but not all schemas are guaranteed to work.

- Kafka Connect doesn’t “manage” Iceberg (e.g. automatically aggregate small files, remove snapshots, etc.)

- You may have to tune the commit interval – 5 minutes is the default.

- But it does have a built-in engine that supports writing to Iceberg.

- You may need to use an external tool (e.g. Apache Spark) for Iceberg management procedures.

- It’s write-only to Iceberg. Reads or deletes are not supported.

But what’s the best thing about the Kafka Connect Iceberg Sink Connector? It’s available now (as part of the Apache Iceberg build) and works on the NetApp Instaclustr Kafka Connect platform as a “bring your own connector” (instructions here).

In part 2, we’ll look at Kafka Tiered Storage and Iceberg Topics!

The post Freezing streaming data into Apache Iceberg™—Part 1: Using Apache Kafka®Connect Iceberg Sink Connector appeared first on Instaclustr.

Stay ahead with Apache Cassandra®: 2025 CEP highlights

Apache Cassandra® committers are working hard, building new features to help you more seamlessly ease operational challenges of a distributed database. Let’s dive into some recently approved CEPs and explain how these upcoming features will improve your workflow and efficiency.

What is a CEP?

CEP stands for Cassandra Enhancement Proposal. CEPs are the process for outlining, discussing, and gathering endorsements for a new feature in Cassandra. They’re more than a feature request; those who put forth a CEP intend to build the feature, and the proposal encourages a high degree of collaboration with the Cassandra contributors.

The CEPs discussed here were recently approved for implementation or have had significant progress in their implementation. As with all open-source development, inclusion in a future release is contingent upon successful implementation, community consensus, testing, and approval by project committers.

CEP-42: Constraints framework

With collaboration from NetApp Instaclustr, CEP-42 and its subsequent iterations deliver schema-level constraints, giving Cassandra users and operators more control over their data. Adding constraints at the schema level means that data can be validated at write time, with an appropriate error returned when the data is invalid.

Constraints are defined in-line or as a separate definition. The inline style allows for only one constraint while a definition allows users to define multiple constraints with different expressions.

CEP-42 initially supported a few constraints that covered the majority of cases, but follow-up efforts have expanded the list to include scalar comparisons (>, <, >=, <=), LENGTH(), OCTET_LENGTH(), NOT NULL, JSON(), and REGEX(). Users can also define their own constraints by implementing them and putting them on Cassandra’s class path.

A simple example of an in-line constraint:

CREATE TABLE users (
    username text PRIMARY KEY,
    age int CHECK age >= 0 and age < 120
);

Constraints are not supported for UDTs (User-Defined Types) nor collections (except for using NOT NULL for frozen collections).

Enabling constraints closer to the data is a subtle but mighty way for operators to ensure that data goes into the database correctly. Defining rules just once simplifies application code, makes it more robust, and prevents validation from being bypassed. Those who have worked with MySQL, Postgres, or MongoDB will enjoy this addition to Cassandra.
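As a rough mental model of what an inline constraint such as age >= 0 and age < 120 means at write time, here is a plain-Python analogue. It is only an illustration of "validate on write, reject with an error"; it is not how Cassandra implements constraints, and the class and function names are hypothetical.

```python
class ConstraintViolation(ValueError):
    """Raised when a value fails a declared check (hypothetical name)."""

# Column name -> (human-readable rule, predicate); mirrors the users table example
CONSTRAINTS = {
    "age": ("age >= 0 and age < 120", lambda v: 0 <= v < 120),
}

def validate_row(row: dict) -> dict:
    """Reject the whole write if any constrained column fails its check."""
    for column, (rule, predicate) in CONSTRAINTS.items():
        if column in row and not predicate(row[column]):
            raise ConstraintViolation(f"{column}={row[column]!r} violates: {rule}")
    return row

validate_row({"username": "alice", "age": 42})      # accepted
try:
    validate_row({"username": "bob", "age": 150})   # rejected
except ConstraintViolation as err:
    print(err)
```

With the rule living in the schema, every client gets the same check without each application re-implementing a function like validate_row.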

CEP-51: Support “Include” Semantics for cassandra.yaml

The cassandra.yaml file holds important settings for storage, memory, replication, compaction, and more. It’s no surprise that the file averages around 1,000 lines (though, yes, most are comments). CEP-51 enables splitting the cassandra.yaml configuration into multiple files using include semantics. From the outside, this feels like a small change, but the implications are huge if a user chooses to opt in.

In general, the size of the configuration file makes it difficult to manage and coordinate changes. It’s often the case that multiple teams manage various aspects of the single file. In addition, anyone with read access to cassandra.yaml can see all of it, meaning private information like credentials is commingled with all other settings. This creates risk from both an operational and a security standpoint.

Enabling the new semantics and therefore modularity for the configuration file eases management, deployment, complexity around environment-specific settings, and security in one shot. The configuration file follows the principle of least privilege once the cassandra.yaml is broken up into smaller, well-defined files; sensitive configuration settings are separated out from general settings with fine-grained access for the individual files. With the feature enabled, different development teams are better equipped to deploy safely and independently.

If you’ve deployed your Cassandra cluster on the NetApp Instaclustr platform, the cassandra.yaml file is already configured and managed for you. We pride ourselves on making it easy for you to get up and running fast.

CEP-52: Schema annotations for Apache Cassandra

With extensive review by the NetApp Instaclustr team and Stefan Miklosovic, CEP-52 introduces schema annotations in CQL allowing in-line comments and labels of schema elements such as keyspaces, tables, columns, and User Defined Types (UDT). Users can easily define and alter comments and labels on these elements. They can be copied over when desired using CREATE TABLE LIKE syntax. Comments are stored as plain text while labels are stored as structured metadata.

Comments and labels serve different annotation purposes: Comments document what a schema object is for, whereas labels describe how sensitive or controlled it is meant to be. For example, labels can be used to identify columns as “PII” or “confidential”, while the comment on that column explains usage, e.g. “Last login timestamp.”

Users can query these annotations. CEP-52 defines two new read-only tables (system_views.schema_comments and system_views.schema_security_labels) to store comments and security labels, so objects with comments can be returned as a list, or a user or machine process can query for specific labels, which is beneficial for auditing and classification. Note that security labels are descriptive metadata and do not enforce access control to the data.

CEP-53: Cassandra rolling restarts via Sidecar

Sidecar is an auxiliary component in the Cassandra ecosystem that exposes cluster management and streaming capabilities through APIs. CEP-53 introduces rolling restarts through Sidecar, designed to give operators more efficient and safer restarts without cluster-wide downtime. More specifically, operators can monitor, pause, resume, and abort restarts through an API, with configurable options for handling failed restarts.

Rolling restarts bring operators a step closer to cluster-wide operations and lifecycle management via Sidecar. Operators will be able to configure the number of nodes to restart concurrently with minimal risk, as this CEP defines clear states for a node as it progresses through a restart. Because the design accounts for a variety of edge cases, an operator can feel assured that, for example, a non-functioning Sidecar won’t derail operations.

The current process for restarting a node is a multi-step, manual process, which does not scale for large cluster sizes (and is also tedious for small clusters). Restarting clusters previously lacked a streamlined approach as each node needed to be restarted one at a time, making the process time-intensive and error-prone.
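The general shape of a rolling restart with a concurrency limit can be sketched in a few lines of Python. This is a conceptual illustration only: it does not use the Sidecar API, and the function names, the state strings and the abort-on-failure policy are all assumptions made for the example.

```python
from typing import Callable, Dict, List

def rolling_restart(
    nodes: List[str],
    restart: Callable[[str], None],
    is_healthy: Callable[[str], bool],
    max_concurrent: int = 1,
) -> Dict[str, object]:
    """Restart nodes in batches of max_concurrent; abort on the first unhealthy batch."""
    restarted: List[str] = []
    for start in range(0, len(nodes), max_concurrent):
        batch = nodes[start:start + max_concurrent]
        for node in batch:
            restart(node)  # placeholder for the real restart action
        failed = [node for node in batch if not is_healthy(node)]
        if failed:
            # Stop here so the remaining nodes are never touched
            return {"state": "ABORTED", "restarted": restarted, "failed": failed}
        restarted.extend(batch)
    return {"state": "COMPLETED", "restarted": restarted, "failed": []}

# Usage with stand-in callables: node "n3" never comes back healthy
calls: List[str] = []
result = rolling_restart(
    ["n1", "n2", "n3", "n4"],
    restart=calls.append,
    is_healthy=lambda node: node != "n3",
)
print(result["state"], calls)
```

Keeping max_concurrent small bounds how many replicas are unavailable at once, which is the property that lets the cluster stay online during the operation.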

Though Sidecar is still considered WIP, it’s got big plans to improve operating large clusters!

The NetApp Instaclustr Platform, in conjunction with our expert TechOps team already orchestrates these laborious tasks for our Cassandra customers with a high level of care to ensure their cluster stays online. Restarting or upgrading your Cassandra nodes is a huge pain-point for operators, but it doesn’t have to be when using our managed platform (with round-the-clock support!)

CEP-54: Zstd with dictionary SSTable compression

CEP-54, with NetApp Instaclustr’s collaboration, aims to add support for Zstd with dictionary compression for SSTables. Zstd, or Zstandard, is a fast, lossless data compression algorithm that boasts impressive ratio and speed and has been supported in Cassandra since 4.0. Certain workloads can benefit from significantly faster read/write performance, reduced storage footprint, and increased storage device lifetime when using dictionary compression.

At a high level, operators choose a table they want to compress with a dictionary. A dictionary must be trained first on a small amount of already present data (recommended no more than 10MiB). The result of a training is a dictionary, which is stored cluster-wide for all other nodes to use, and this dictionary is used for all subsequent writes of SSTables to a disk.

Workloads with structured data of similar rows benefit most from Zstd with dictionary compression. Some examples of ideal workloads include event logs, telemetry data, and metadata tables with templated messages. Think: repeated row data. If the table data is too unstructured or random, dictionary compression likely won’t be optimal; however, plain Zstd will still be an excellent option.

New SSTables with dictionaries are readable across nodes and can be streamed, repaired, and backed up. Existing tables are unaffected if dictionary compression is not enabled. Too many unique dictionaries hurt decompression; use as few dictionaries as possible (the recommended dictionary size is about 100KiB, with one dictionary per table) and only adopt new ones when they’re noticeably better.
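To see why a shared dictionary helps with small, similar rows, here is a sketch using Python’s standard-library zlib, which supports preset dictionaries. This is a stand-in technique, not Zstd: Zstd dictionaries are produced by a training step, whereas here the "dictionary" is simply a few concatenated sample rows, and the sample data is invented for the example.

```python
import zlib
from typing import Optional

# Invented sample rows with a repeated, templated structure
sample_rows = [
    b'{"event":"login","user":"u1001","status":"ok","region":"us-east-1"}',
    b'{"event":"logout","user":"u1002","status":"ok","region":"us-east-1"}',
    b'{"event":"login","user":"u1003","status":"denied","region":"eu-west-1"}',
]
# Stand-in for a trained dictionary: just the concatenated samples
dictionary = b"".join(sample_rows)

new_row = b'{"event":"login","user":"u2042","status":"ok","region":"eu-west-1"}'

def compress(data: bytes, zdict: Optional[bytes] = None) -> bytes:
    comp = zlib.compressobj(level=9, zdict=zdict) if zdict else zlib.compressobj(level=9)
    return comp.compress(data) + comp.flush()

def decompress(data: bytes, zdict: Optional[bytes] = None) -> bytes:
    decomp = zlib.decompressobj(zdict=zdict) if zdict else zlib.decompressobj()
    return decomp.decompress(data) + decomp.flush()

plain = compress(new_row)
with_dict = compress(new_row, dictionary)

print(len(new_row), len(plain), len(with_dict))
# The reader needs the same dictionary, which is why it must be shared cluster-wide
assert decompress(with_dict, dictionary) == new_row
```

A single short row has little internal repetition, so compressing it alone gains almost nothing; with the dictionary, most of the row is encoded as references to already-known content.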

CEP-55: Generated role names

CEP-55 adds support for creating users/roles without supplying a name, simplifying user management, especially when generating users and roles in bulk. An example of the syntax is CREATE GENERATED ROLE WITH GENERATED PASSWORD. The new configuration keys are placed in a newly introduced section in cassandra.yaml under “role_name_policy.”

Stefan Miklosovic, our Cassandra engineer at NetApp Instaclustr, created this CEP as a logical follow up to CEP-24 (password validation/generation), which he authored as well. These quality-of-life improvements let operators spend less time doing trivial tasks with high-risk potential and more time on truly complex matters.

Manual name selection seems trivial until a hundred role names need to be generated; now there is a security risk if the new usernames (or worse, passwords) are easily guessable. With CEP-55, the generated role name will be UUID-like, with optional prefix/suffix and size hints; a pluggable policy is also available to generate and validate names. This is an opt-in feature with no effect on the existing method of generating role names.
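For intuition, here is what "UUID-like names with optional prefix/suffix and size hints" could look like if done client-side in Python. The exact format Cassandra generates is defined by CEP-55 and its configured policy; the function below and its parameters are hypothetical.

```python
import secrets
import uuid

def generate_role_name(prefix: str = "", suffix: str = "", size: int = 32) -> str:
    """Build a hard-to-guess role name from a random UUID (hypothetical format)."""
    if not 1 <= size <= 32:
        raise ValueError("size must be between 1 and 32 hex characters")
    return f"{prefix}{uuid.uuid4().hex[:size]}{suffix}"

def generate_password(n_bytes: int = 24) -> str:
    """Random URL-safe password from the OS's secure random source."""
    return secrets.token_urlsafe(n_bytes)

# Bulk generation: 100 roles with no human-chosen (guessable) names
roles = [(generate_role_name(prefix="svc_", size=12), generate_password()) for _ in range(100)]
print(roles[0][0])
```

Random generation removes the temptation to use predictable patterns such as app1, app2, app3 when creating roles in bulk.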

The future of Apache Cassandra is bright

These Cassandra Enhancement Proposals demonstrate a strong commitment to making Apache Cassandra more powerful, secure, and easier to operate. By staying on top of these updates, we ensure our managed platform seamlessly supports future releases that accelerate your business needs.

At NetApp Instaclustr, our expert TechOps team already orchestrates laborious tasks like restarts and upgrades for our Apache Cassandra customers, ensuring their clusters stay online. Our platform handles the complexity so you can get up and running fast.

Learn more about our fully managed and hosted Apache Cassandra offering and try it for free today!

The post Stay ahead with Apache Cassandra®: 2025 CEP highlights appeared first on Instaclustr.

Vector search benchmarking: Embeddings, insertion, and searching documents with ClickHouse® and Apache Cassandra®

Welcome back to our series on vector search benchmarking. In part 1, we dove into setting up a benchmarking project and explored how to implement vector search in PostgreSQL from the example code in GitHub. We saw how a hands-on project with students from Northeastern University provided a real-world testing ground for Retrieval-Augmented Generation (RAG) pipelines.

Now, we’re continuing our journey by exploring two more powerful open source technologies: ClickHouse and Apache Cassandra. Both handle vector data differently and understanding their methods is key to effective vector search benchmarking. Using the same student project as our guide, this post will examine the code for embedding, inserting, and retrieving data to see how these technologies stack up.

Let’s get started.

Vector search benchmarking with ClickHouse

ClickHouse is a column-oriented database management system known for its incredible speed in analytical queries. It’s no surprise that it has also embraced vector search. Let’s see how the student project team implemented and benchmarked the core components.

Step 1: Embedding and inserting data

scripts/vectorize_and_upload.py

This is the file that handles Step 1 of the pipeline for ClickHouse. Embeddings in this file (scripts/vectorize_and_upload.py) are used as vector representations of Guardian news articles for the purpose of storing them in a database and performing semantic search. Here’s how embeddings are handled step-by-step (the steps look similar to PostgreSQL).

First up is the generation of embeddings. The same SentenceTransformer model used in part 1 (all-MiniLM-L6-v2) is loaded in the class constructor. In the method generate_embeddings(self, articles), for each article:

- The article’s title and body are concatenated into a text string.

- The model generates an embedding vector (self.model.encode(text_for_embedding)), which is a numerical representation of the article’s semantic content.

- The embedding is added to the article’s dictionary under the key embedding.

Then the embeddings are stored in ClickHouse as follows.

- The database table guardian_articles is created with an embedding Array(Float64) NOT NULL column specifically to store these vectors.

- In upload_to_clickhouse_debug(self, articles_with_embeddings), the script inserts articles into ClickHouse, including the embedding vector as part of each row.
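The embedding step described above can be sketched as follows. This is not the project’s code: the function is written standalone, the article keys title and body and the space used to join them are assumptions, and a small stub stands in for the real model so the example runs without downloading anything.

```python
from typing import Any, Dict, List

class StubModel:
    """Stand-in for a SentenceTransformer; returns a tiny fake 'embedding'."""
    def encode(self, text: str) -> List[float]:
        return [float(len(text)), float(text.count(" "))]

def generate_embeddings(model: Any, articles: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Attach an 'embedding' list of floats to each article dictionary."""
    for article in articles:
        # Assumption: title and body are joined with a single space
        text_for_embedding = f"{article.get('title', '')} {article.get('body', '')}".strip()
        vector = model.encode(text_for_embedding)
        # Convert to plain Python floats so the row can be inserted as an array
        article["embedding"] = [float(x) for x in vector]
    return articles

articles = generate_embeddings(StubModel(), [{"title": "Hello", "body": "big world"}])
print(articles[0]["embedding"])
```

In the real pipeline the stub would be replaced by SentenceTransformer("all-MiniLM-L6-v2") from the sentence_transformers package, whose encode method returns a 384-element vector.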

services/clickhouse/clickhouse_dao.py

The steps to search are the same as for PostgreSQL in part 1.

Here’s part of the related_articles method for ClickHouse:

def related_articles(self, query: str, limit: int = 5):
    """Search for similar articles using vector similarity"""
    ...
    query_embedding = self.model.encode(query).tolist()
    search_query = f"""
        SELECT url, title, body, publication_date,
               cosineDistance(embedding, {query_embedding}) as distance
        FROM guardian_articles
        ORDER BY distance ASC
        LIMIT {limit}
    """
    ...

When searching for related articles, the method encodes the query into an embedding, then performs a vector similarity search in ClickHouse using cosineDistance between the stored embeddings and the query embedding. Results are ordered by ascending distance, so the most relevant articles are returned first.
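For readers who want to see what the ordering is based on, here is a pure-Python sketch of cosine distance (one minus cosine similarity) and a brute-force ranking over a handful of documents. The document dictionaries and the rank helper are illustrative only; in the project this computation happens inside ClickHouse.

```python
import math
from typing import Dict, List, Sequence

def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity: 0 for same direction, 1 for orthogonal, 2 for opposite."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def rank(query: Sequence[float], docs: List[Dict], limit: int = 5) -> List[Dict]:
    """Brute-force equivalent of ORDER BY distance ASC LIMIT n."""
    return sorted(docs, key=lambda d: cosine_distance(d["embedding"], query))[:limit]

docs = [
    {"url": "a", "embedding": [1.0, 0.0]},
    {"url": "b", "embedding": [0.0, 1.0]},
    {"url": "c", "embedding": [1.0, 1.0]},
]
print([d["url"] for d in rank([1.0, 0.0], docs, limit=2)])
```

Because cosine distance ignores vector length and only compares direction, two articles of very different lengths can still be close if they are about the same topic.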

Vector search benchmarking with Apache Cassandra

Next, let’s turn our attention to Apache Cassandra. As a distributed NoSQL database, Cassandra is designed for high availability and scalability, making it an intriguing option for large-scale RAG applications.

Step 1: Embedding and inserting data

scripts/pull_docs_cassandra.py

As in the above examples, embeddings in this file are used to convert article text (body) into numerical vector representations for storage and later retrieval in Cassandra.

For each article, the code extracts the body and computes the embeddings:

embedding = model.encode(body)
embedding_list = [float(x) for x in embedding]

- model.encode(body) converts the text to a NumPy array of 384 floats.

- The array is converted to a standard Python list of floats for Cassandra storage.

Next, the embedding is stored in the vector column of the articles table using a CQL INSERT:

insert_cql = SimpleStatement("""
    INSERT INTO articles (url, title, body, publication_date, vector)
    VALUES (%s, %s, %s, %s, %s)
    IF NOT EXISTS;
""")
result = session.execute(insert_cql, (url, title, body, publication_date, embedding_list))

The schema for the table specifies: vector vector<float, 384>, meaning each article has a corresponding 384-dimensional embedding. The code also creates a custom index for the vector column:

session.execute("""
    CREATE CUSTOM INDEX IF NOT EXISTS ann_index
    ON articles(vector) USING 'StorageAttachedIndex';
""")

This enables efficient vector (ANN: Approximate Nearest Neighbor) search capabilities, allowing similarity queries on stored embeddings.

A key part of the setup is the schema and indexing. The Cassandra schema in services/cassandra/init/01-schema.cql defines the vector column.

Because Cassandra is a NoSQL database, its schemas are a bit different from those of typical SQL databases, so it’s worth taking a closer look. This Cassandra schema is designed to support Retrieval-Augmented Generation (RAG) architectures, which combine information retrieval with generative models to answer queries using both stored data and generative AI. Here’s how the schema supports RAG:

- Keyspace and table structure

  - Keyspace (vectorembeds): Analogous to a database, this isolates all RAG-related tables and data.

  - Table (articles): Stores retrievable knowledge sources (e.g., articles) for use in generation.

- Table columns

  - url TEXT PRIMARY KEY: Uniquely identifies each article/document, useful for referencing and deduplication.

  - title TEXT and body TEXT: Store the actual content and metadata, which may be retrieved and passed to the generative model during RAG.

  - publication_date TIMESTAMP: Enables filtering or ranking based on recency.

  - vector VECTOR<FLOAT, 384>: Stores the embedding representation of the article. The new Cassandra vector data type is documented here.

- Indexing

  - Sets up an Approximate Nearest Neighbor (ANN) index using Cassandra’s Storage Attached Index.

More information about Cassandra vector support is in the documentation.
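Putting the description above together, the schema could look roughly like the CQL below, held here as Python strings so it can be applied through a driver session. This is a reconstruction from the bullet points, not the contents of 01-schema.cql; in particular the replication settings are an assumption suitable only for a single-node test setup.

```python
SCHEMA_STATEMENTS = [
    # Assumption: SimpleStrategy with RF 1, for a local single-node test only
    """
    CREATE KEYSPACE IF NOT EXISTS vectorembeds
    WITH replication = {'class': 'SimpleStrategy', 'replication_factor': 1}
    """,
    """
    CREATE TABLE IF NOT EXISTS vectorembeds.articles (
        url TEXT PRIMARY KEY,
        title TEXT,
        body TEXT,
        publication_date TIMESTAMP,
        vector VECTOR<FLOAT, 384>
    )
    """,
    """
    CREATE CUSTOM INDEX IF NOT EXISTS ann_index
    ON vectorembeds.articles(vector) USING 'StorageAttachedIndex'
    """,
]

def apply_schema(session) -> int:
    """Execute each statement on any object exposing execute(cql); returns the count."""
    for statement in SCHEMA_STATEMENTS:
        session.execute(statement)
    return len(SCHEMA_STATEMENTS)
```

With the Python cassandra-driver, session would be the object returned by Cluster(...).connect(); the vector dimension (384) must match the output size of the embedding model.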

Step 2: Vector search and retrieval

The retrieval logic in services/cassandra/cassandra_dao.py showcases the elegance of Cassandra’s vector search capabilities.

The code to create the query embeddings and perform the query is similar to the previous examples, but the CQL query to retrieve similar documents looks like this:

query_cql = """
    SELECT url, title, body, publication_date
    FROM articles
    ORDER BY vector ANN OF ?
    LIMIT ?
"""
prepared = self.client.prepare(query_cql)
rows = self.client.execute(prepared, (emb, limit))

What have we learned?

By exploring the code from this RAG benchmarking project we’ve seen distinct approaches to vector search. Here’s a summary of key takeaways:

- Critical steps in the process:

- Step 1: Embedding articles and inserting them into the vector databases.

- Step 2: Embedding queries and retrieving relevant articles from the database.

- Key design pattern:

- The DAO (Data Access Object) design pattern provides a clean, scalable way to support multiple databases.

- This approach could extend to other databases, such as OpenSearch, in the future.

- Additional insights:

- It’s possible to perform vector searches over the latest documents, pre-empting queries, and potentially speeding up the pipeline.
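The DAO pattern mentioned in the takeaways can be illustrated with a short sketch. The class names are hypothetical and the in-memory implementation uses simple word overlap as a stand-in for vector similarity; the point is only that callers depend on one interface while each database gets its own implementation.

```python
from abc import ABC, abstractmethod
from typing import Dict, List

class ArticleDAO(ABC):
    """Common interface every database backend implements (hypothetical name)."""

    @abstractmethod
    def related_articles(self, query: str, limit: int = 5) -> List[Dict]:
        ...

class InMemoryArticleDAO(ArticleDAO):
    """Toy backend: ranks by shared words instead of embedding similarity."""

    def __init__(self, articles: List[Dict]):
        self.articles = articles

    def related_articles(self, query: str, limit: int = 5) -> List[Dict]:
        query_words = set(query.lower().split())

        def score(article: Dict) -> int:
            text = f"{article['title']} {article['body']}".lower()
            return len(query_words & set(text.split()))

        return sorted(self.articles, key=score, reverse=True)[:limit]

def answer_context(dao: ArticleDAO, question: str) -> List[str]:
    """Caller code works with any backend that implements ArticleDAO."""
    return [article["url"] for article in dao.related_articles(question, limit=1)]

dao = InMemoryArticleDAO([
    {"url": "a", "title": "Cassandra vector search", "body": "ANN indexes"},
    {"url": "b", "title": "Football results", "body": "weekend scores"},
])
print(answer_context(dao, "vector search"))
```

Adding another database then means writing one more subclass, with no changes to code such as answer_context.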

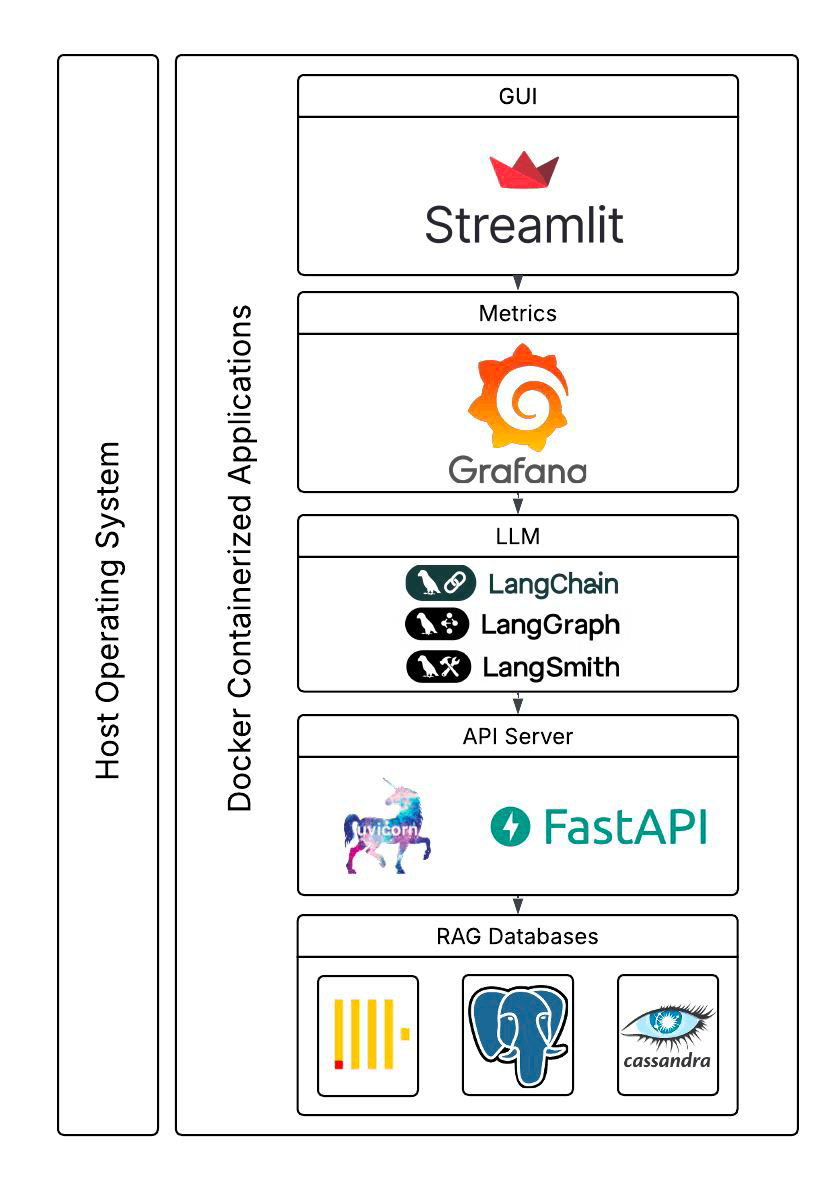

So far, we have only scratched the surface. The students built a complete benchmarking application with a GUI (using Steamlit), used multiple other interesting components (e.g. LangChain, LangGraph, FastAPI and uvicorn), Grafana and LangSmith for metrics, and Claude to use the retrieved articles to answer questions, and Docker support for the components. They also revealed some preliminary performance results! Here’s what the final system looked like (this and the previous blog focused on the bottom boxes only).

In a future article, we will examine the rest of the application code, look at the preliminary performance results the students uncovered, and discuss what they tell us about the trade-offs between these different databases.

Ready to learn more right now? We have a wealth of resources on vector search. You can explore our blogs on ClickHouse vector search and Apache Cassandra Vector Search (here, here, and here) to deepen your understanding.

The post Vector search benchmarking: Embeddings, insertion, and searching documents with ClickHouse® and Apache Cassandra® appeared first on Instaclustr.

Optimizing Cassandra Repair for Higher Node Density

This is the fourth post in my series on improving the cost efficiency of Apache Cassandra through increased node density. In the last post, we explored compaction strategies, specifically the new UnifiedCompactionStrategy (UCS) which appeared in Cassandra 5.

- Streaming Throughput

- Compaction Throughput and Strategies

- Repair (you are here)

- Query Throughput

- Garbage Collection and Memory Management

- Efficient Disk Access

- Compression Performance and Ratio

- Linearly Scaling Subsystems with CPU Core Count and Memory

Now, we’ll tackle another aspect of Cassandra operations that directly impacts how much data you can efficiently store per node: repair. Having worked with repairs across hundreds of clusters since 2012, I’ve developed strong opinions on what works and what doesn’t when you’re pushing the limits of node density.

Building easy-cass-mcp: An MCP Server for Cassandra Operations

I’ve started working on a new project that I’d like to share, easy-cass-mcp, an MCP (Model Context Protocol) server specifically designed to assist Apache Cassandra operators.

After spending over a decade optimizing Cassandra clusters in production environments, I’ve seen teams consistently struggle with how to interpret system metrics, configuration settings, schema design, and system configuration, and most importantly, how to understand how they all impact each other. While many teams have solid monitoring through JMX-based collectors, extracting and contextualizing specific operational metrics for troubleshooting or optimization can still be cumbersome. The good news is that we now have the infrastructure to make all this operational knowledge accessible through conversational AI.

easy-cass-stress Joins the Apache Cassandra Project

I’m taking a quick break from my series on Cassandra node density to share some news with the Cassandra community: easy-cass-stress has officially been donated to the Apache Software Foundation and is now part of the Apache Cassandra project ecosystem as cassandra-easy-stress.

Why This Matters

Over the past decade, I’ve worked with countless teams struggling with Cassandra performance testing and benchmarking. The reality is that stress testing distributed systems requires tools that can accurately simulate real-world workloads. Many tools make this difficult by requiring the end user to learn complex configurations and nuance. While consulting at The Last Pickle, I set out to create an easy to use tool that lets people get up and running in just a few minutes

Azure fault domains vs availability zones: Achieving zero downtime migrations

One of the challenges of operating production-ready enterprise systems in the cloud is ensuring that applications remain up to date and secure, and that they benefit from the latest features. This can include operating system or application version upgrades, but it also extends to advancements in cloud provider offerings or the retirement of older ones. Recently, NetApp Instaclustr undertook a migration activity to move (almost) all our Azure fault domain customers to availability zones, and from Basic SKU to Standard SKU public IP addresses.

Understanding Azure fault domains vs availability zones

“Azure fault domain vs availability zone” reflects a critical distinction in ensuring high availability and fault tolerance. Fault domains offer physical separation within a data center, while availability zones expand on this by distributing workloads across data centers within a region. This enhances resiliency against failures, making availability zones a clear step forward.

The need for migrating from fault domains to availability zones

NetApp Instaclustr has supported Azure as a cloud provider for our Managed open source offerings since 2016. Originally this offering was distributed across fault domains to ensure high availability using “Basic SKU public IP Addresses”, but this solution had some drawbacks when performing particular types of maintenance. Once Azure released availability zones in several regions, we extended our Azure support to them. Availability zones have a number of benefits, including more explicit placement of additional resources, and we leveraged “Standard SKU Public IPs” as part of this deployment.

When we introduced availability zones, we encouraged customers to provision new workloads in them. We also supported migrating workloads to availability zones, but we had not pushed existing deployments to do the migration. This was initially due to the reduced number of regions that supported availability zones.

In early 2024, we were notified that Azure would be retiring support for Basic SKU public IP addresses in September 2025. Notably, no new Basic SKU public IPs would be created after March 1, 2025. For us and our customers, this had the potential to impact cluster availability and stability – as we would be unable to add nodes, and some replacement operations would fail.

Very quickly we identified that we needed to migrate all customer deployments from Basic SKU to Standard SKU public IPs. Unfortunately, this operation involves node-level downtime as we needed to stop each individual virtual machine, detach the IP address, upgrade the IP address to the new SKU, and then reattach and start the instance. For customers who are operating their applications in line with our recommendations, node-level downtime does not have an impact on overall application availability, however it can increase strain on the remaining nodes.

Given that we needed to perform this potentially disruptive maintenance by a specific date, we decided to evaluate the migration of existing customers to Azure availability zones.

Key migration consideration for Cassandra clusters

As with any migration, we wanted to perform this with zero application downtime, minimal additional infrastructure costs, and as safely as possible. For some customers, we also needed to ensure that we did not change the contact IP addresses of the deployment, as this may require application updates from their side. We quickly worked out several ways to achieve this migration, each with its own set of pros and cons.

For our Cassandra customers, our go-to method for changing cluster topology is a data center migration. This is our zero-downtime migration method, which we have completed hundreds of times and have vast experience in executing. The benefit here is that we can be extremely confident of application uptime through the entire operation and be confident in the ability to pause and reverse the migration if issues are encountered. The major drawback to a data center migration is the increased infrastructure cost during the migration period, as you effectively need to have both your source and destination data centers running simultaneously throughout the operation. The other item of note is that you will need to update your cluster contact points to the new data center.

For clusters running other applications, or customers who are more cost conscious, we evaluated doing a “node by node” migration from Basic SKU IP addresses in fault domains, to Standard SKU IP addresses in availability zones. This does not have any short-term increased infrastructure cost, however the upgrade from Basic SKU public IP to Standard SKU is irreversible, and different types of public IPs cannot coexist within the same fault domain. Additionally, this method comes with reduced rollback abilities. Therefore, we needed to devise a plan to minimize risks for our customers and ensure a seamless migration.

Developing a zero-downtime node-by-node migration strategy

To achieve a zero-downtime “node by node” migration, we explored several options, one of which involved building tooling to migrate the instances in the cloud provider but preserve all existing configurations. The tooling automates the migration process as follows:

- Stop the first VM in the cluster. For cluster availability, ensure that only 1 VM is stopped at any time.

- Create an OS disk snapshot and verify its success, then do the same for the data disks

- Ensure all snapshots are created, then generate new disks from the snapshots

- Create a new network interface card (NIC) and confirm its status is green

- Create a new VM and attach the disks, confirming that the new VM is up and running

- Update the private IP address and verify the change

- Upgrade the public IP SKU and make sure this operation is successful

- Reattach the public IP to the VM

- Start the VM
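The ordering and verification rules in the steps above can be sketched in a few lines of Python. This is only an illustrative outline, not the actual tooling: `MigrationRunner`, `placeholder`, and `STEP_NAMES` are hypothetical names, and each step is a stand-in for a real cloud API call followed by its verification check. What the sketch tries to capture is that nodes are processed strictly one at a time and that the rollout halts at the first step that cannot be verified.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical step type: a name plus an action that returns True on success.
Step = Tuple[str, Callable[[str], bool]]


@dataclass
class MigrationRunner:
    """Runs the ordered steps for one VM at a time and stops on the first failure."""
    steps: List[Step]
    log: List[str] = field(default_factory=list)

    def migrate_node(self, vm: str) -> bool:
        for name, action in self.steps:
            ok = action(vm)
            self.log.append(f"{vm}: {name}: {'ok' if ok else 'FAILED'}")
            if not ok:
                return False  # do not continue past an unverified step
        return True

    def migrate_cluster(self, vms: List[str]) -> List[str]:
        """Migrate nodes strictly one after another; return the nodes completed."""
        done = []
        for vm in vms:
            if not self.migrate_node(vm):
                break  # halt the rollout so no further nodes are touched
            done.append(vm)
        return done


def placeholder(vm: str) -> bool:
    # Stand-in for a real cloud API call followed by a verification check.
    return True


STEP_NAMES = [
    "stop VM",
    "snapshot OS and data disks",
    "create disks from snapshots",
    "create NIC",
    "create new VM and attach disks",
    "update private IP",
    "upgrade public IP SKU",
    "reattach public IP",
    "start VM",
]

if __name__ == "__main__":
    runner = MigrationRunner([(name, placeholder) for name in STEP_NAMES])
    print(runner.migrate_cluster(["node-1", "node-2", "node-3"]))
```

Because each node is handled sequentially and a failure stops the loop, at most one VM is ever mid-migration, which mirrors the availability constraint in the first step.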

Even though the disks are created from snapshots of the original disks, our testing revealed several discrepancies between the settings of the original VM and those of the new VM. For instance, certain configurations, such as caching policies, did not automatically carry over, requiring manual adjustments to align with our managed standards.

Recognizing these challenges, we decided to extend our existing node replacement mechanism to streamline our migration process. With this mechanism, a new instance is provisioned with a new OS disk while keeping the same IP and application data. The new node is configured by the Instaclustr Managed Platform to be the same as the original node.

The next challenge: our existing solution was built so that the replacement node is provisioned to be exactly the same as the original. However, for this operation we needed the new node to be placed in an availability zone instead of the same fault domain. This required us to extend the replacement operation so that when we triggered the replacement, the new node was placed in the desired availability zone. Once this work was complete, we had a replacement tool that ensured that the new instance was correctly provisioned in the availability zone, with a Standard SKU, and without data loss.

Now that we had two very viable options, we went back to our existing Azure customers to outline the problem space, and the operations that needed to be completed. We worked with all impacted customers on the best migration path for their specific use case or application and worked out the best time to complete the migration. Where possible, we first performed the migration on any test or QA environments before moving onto production environments.

Collaborative customer migration success

Some of our Cassandra customers opted to perform the migration using our data center migration path; however, most customers opted for the node-by-node method. We successfully migrated the existing Azure fault domain clusters over to the availability zones that we were targeting, with only a very small number of clusters remaining. These remaining clusters operate in Azure regions that do not yet support availability zones, but we were able to successfully upgrade their public IPs from the Basic SKUs that are set for retirement to Standard SKUs.

No matter what provider you use, the pace of development in cloud computing can require significant effort to support ongoing maintenance and feature adoption to take advantage of new opportunities. For business-critical applications, being able to migrate to new infrastructure and leverage these opportunities while understanding the limitations and impact they have on other services is essential.

NetApp Instaclustr has a depth of experience in supporting business-critical applications in the cloud. You can read more about another large-scale migration we completed in “The World’s Largest Apache Kafka and Apache Cassandra Migration,” or head over to our console for a free trial of the Instaclustr Managed Platform.

The post Azure fault domains vs availability zones: Achieving zero downtime migrations appeared first on Instaclustr.

Compaction Strategies, Performance, and Their Impact on Cassandra Node Density

This is the third post in my series on optimizing Apache Cassandra for maximum cost efficiency through increased node density. In the first post, I examined how streaming operations impact node density and laid out the groundwork for understanding why higher node density leads to significant cost savings. In the second post, I discussed how compaction throughput is critical to node density and introduced the optimizations we implemented in CASSANDRA-15452 to improve throughput on disaggregated storage like EBS.

Cassandra Compaction Throughput Performance Explained

This is the second post in my series on improving node density and lowering costs with Apache Cassandra. In the previous post, I examined how streaming performance impacts node density and operational costs. In this post, I’ll focus on compaction throughput, and a recent optimization in Cassandra 5.0.4 that significantly improves it, CASSANDRA-15452.

This post assumes some familiarity with Apache Cassandra storage engine fundamentals. The documentation has a nice section covering the storage engine if you’d like to brush up before reading this post.

How Cassandra Streaming, Performance, Node Density, and Cost are All related

This is the first post of several I have planned on optimizing Apache Cassandra for maximum cost efficiency. I’ve spent over a decade working with Cassandra and have spent tens of thousands of hours data modeling, fixing issues, writing tools for it, and analyzing its performance. I’ve always been fascinated by database performance tuning, even before Cassandra.

A decade ago I filed one of my first issues with the project, where I laid out my target goal of 20TB of data per node. This wasn’t possible for most workloads at the time, but I’ve kept this target in my sights.

Cassandra 5 Released! What's New and How to Try it

Apache Cassandra 5.0 has officially landed! This highly anticipated release brings a range of new features and performance improvements to one of the most popular NoSQL databases in the world. Having recently hosted a webinar covering the major features of Cassandra 5.0, I’m excited to give a brief overview of the key updates and show you how to easily get hands-on with the latest release using easy-cass-lab.

You can grab the latest release on the Cassandra download page.

easy-cass-lab v5 released

I’ve got some fun news to start the week off for users of easy-cass-lab: I’ve just released version 5. There are a number of nice improvements and bug fixes in here that should make it more enjoyable, more useful, and lay groundwork for some future enhancements.

- When the cluster starts, we wait for the storage service to reach NORMAL state, then move to the next node. This is in contrast to the previous behavior where we waited for 2 minutes after starting a node. This queries JMX directly using Swiss Java Knife and is more reliable than the 2-minute method. Please see packer/bin-cassandra/wait-for-up-normal to read through the implementation.

- Trunk now works correctly. Unfortunately, AxonOps doesn’t support trunk (5.1) yet, and using the agent was causing a startup error. You can test trunk out, but for now the AxonOps integration is disabled.

- Added a new repl mode. This saves keystrokes and provides some auto-complete functionality and keeps SSH connections open. If you’re going to do a lot of work with ECL this will help you be a little more efficient. You can try this out with ecl repl.

- Power user feature: Initial support for profiles in AWS regions other than us-west-2. We only provide AMIs for us-west-2, but you can now set up a profile in an alternate region, and build the required AMIs using easy-cass-lab build-image. This feature is still under development and requires using an easy-cass-lab build from source. Credit to Jordan West for contributing this work.

- Power user feature: Support for multiple profiles. Setting the EASY_CASS_LAB_PROFILE environment variable allows you to configure alternate profiles. This is handy if you want to use multiple regions or have multiple organizations.

- The project now uses Kotlin instead of Groovy for Gradle configuration.

- Updated Gradle to 8.9.

- When using the list command, don’t show the alias “current”.

- Project cleanup, remove old unused pssh, cassandra build, and async profiler subprojects.
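The first item in the list above replaces a fixed 2-minute wait with a readiness check. As a rough illustration of that idea only (this is not the wait-for-up-normal script, which queries JMX via Swiss Java Knife), the Python sketch below polls a caller-supplied function until it reports the target state or a timeout expires. The `wait_for_state` function, the `get_state` callable, and the timeout and interval defaults are all assumptions made for the example.

```python
import time
from typing import Callable


def wait_for_state(
    get_state: Callable[[], str],
    target: str = "NORMAL",
    timeout_s: float = 300.0,
    interval_s: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Poll get_state() until it returns target or timeout_s elapses."""
    deadline = clock() + timeout_s
    while True:
        # Hypothetical hook: in practice this would ask the node for its state.
        if get_state() == target:
            return True
        if clock() >= deadline:
            return False  # give up rather than wait forever
        sleep(interval_s)
```

Compared with a fixed sleep, this returns as soon as the node is ready and reports a failure explicitly when it never becomes ready. The `sleep` and `clock` parameters are injectable only so the loop can be exercised without real delays.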

This version has been released to the project’s GitHub page and to homebrew. The project is largely driven by my own consulting needs and by my training. If you’re looking to have some features prioritized, please reach out, and we can discuss a consulting engagement.

easy-cass-lab updated with Cassandra 5.0 RC-1 Support

I’m excited to announce that the latest version of easy-cass-lab now supports Cassandra 5.0 RC-1, which was just made available last week! This update marks a significant milestone, providing users with the ability to test and experiment with the newest Cassandra 5.0 features in a simplified manner. This post will walk you through how to set up a cluster, SSH in, and run your first stress test.

For those new to easy-cass-lab, it’s a tool designed to streamline the setup and management of Cassandra clusters in AWS, making it accessible for both new and experienced users. Whether you’re running tests, developing new features, or just exploring Cassandra, easy-cass-lab is your go-to tool.

easy-cass-lab now available in Homebrew

I’m happy to share some exciting news for all Cassandra enthusiasts! My open source project, easy-cass-lab, is now installable via a homebrew tap. This powerful tool is designed to make testing any major version of Cassandra (or even builds that haven’t been released yet) a breeze, using AWS. A big thank-you to Jordan West who took the time to make this happen!

What is easy-cass-lab?

easy-cass-lab is a versatile testing tool for Apache Cassandra. Whether you’re dealing with the latest stable releases or experimenting with unreleased builds, easy-cass-lab provides a seamless way to test and validate your applications. With easy-cass-lab, you can ensure compatibility and performance across different Cassandra versions, making it an essential tool for developers and system administrators. easy-cass-lab is used extensively for my consulting engagements, my training program, and to evaluate performance patches destined for open source Cassandra. Here are a few examples:

Cassandra Training Signups For July and August Are Open!

I’m pleased to announce that I’ve opened training signups for Operator Excellence to the public for July and August. If you’re interested in stepping up your game as a Cassandra operator, this course is for you. Head over to the training page to find out more and sign up for the course.

Streaming My Sessions With Cassandra 5.0

As a long time participant with the Cassandra project, I’ve witnessed firsthand the evolution of this incredible database. From its early days to the present, our journey has been marked by continuous innovation, challenges, and a relentless pursuit of excellence. I’m thrilled to share that I’ll be streaming several working sessions over the next several weeks as I evaluate the latest builds and test out new features as we move toward the 5.0 release.

Streaming Cassandra Workloads and Experiments

Streaming

In the world of software engineering, especially within the realm of distributed systems, continuous learning and experimentation are not just beneficial; they’re essential. As a software engineer with a focus on distributed systems, particularly Apache Cassandra, I’ve taken this ethos to heart. My journey has led me to not only explore the intricacies of Cassandra’s distributed architecture but also to share my experiences and findings with a broader audience. This is why my YouTube channel has become an active platform where I stream at least once a week, engaging with viewers through coding sessions, trying new approaches, and benchmarking different Cassandra workloads.

Live Streaming On Tuesdays

As I promised in December, I redid my presentation from the Cassandra Summit 2023 on a live stream. You can check it out at the bottom of this post.

Going forward, I’ll be live-streaming on Tuesdays at 10AM Pacific on my YouTube channel.

Next week I’ll be taking a look at tlp-stress, which is used by the teams at some of the biggest Cassandra deployments in the world to benchmark their clusters. You can find that here.

Cassandra Summit Recap: Performance Tuning and Cassandra Training

Hello, friends in the Apache Cassandra community!

I recently had the pleasure of speaking at the Cassandra Summit in San Jose. Unfortunately, we ran into an issue with my screen refusing to cooperate with the projector, so my slides were pretty distorted and hard to read. While the talk is online, I think it would be better to have a version with the right slides as well as a little more time. I’ve decided to redo the entire talk via a live stream on YouTube. I’m scheduling this for 10am PST on Wednesday, January 17 on my YouTube channel. My original talk was done in a 30-minute slot; this will be a full hour, giving plenty of time for Q&A.

Cassandra Summit, YouTube, and a Mailing List

I am thrilled to share some significant updates and exciting plans with my readers and the Cassandra community. As we draw closer to the end of the year, I’m preparing for an important speaking engagement and mapping out a year ahead filled with engaging and informative activities.

Cassandra Summit Presentation: Mastering Performance Tuning

I am honored to announce that I will be speaking at the upcoming Cassandra Summit. My talk, titled “Cassandra Performance Tuning Like You’ve Been Doing It for Ten Years,” is scheduled for December 13th, from 4:10 pm to 4:40 pm. This session aims to equip attendees with advanced insights and practical skills for optimizing Cassandra’s performance, drawing from a decade’s worth of experience in the field. Whether you’re new to Cassandra or a seasoned user, this talk will provide valuable insights to enhance your database management skills.